This prototype was an intermediate result of a human-robot interaction project conducted at Torooc Inc., a VC-funded start-up where I was a co-founder and Director of Software Development, in 2011. We launched the company by winning first prize at the 2012 Start-up Competition held by the Seoul National University R&DB Foundation and Seoul Techno Holdings.

I first designed two versions of the microphone amplifier circuit, one built from transistors and one built around amplifier chips. I then connected three microphones to a Texas Instruments Stellaris LM4F embedded board and implemented the Time-Difference of Arrival (TDOA) algorithm on it in C. Specifically, (1) the sound signals acquired through the three microphones were transformed into frequency-domain signals using the Fast Fourier Transform (FFT); (2) the correlation values between the signals were calculated; and (3) the direction of the sound source was determined from those correlation values (i.e., sound source localization). The motors of the robot prototype were driven by an Arduino board that received the calculated values from the Stellaris board in real time. Two separate boards were used because of the Arduino's limited memory and the electrical noise generated by the servo motors. The plastic body of the prototype was built using a 3D printer.

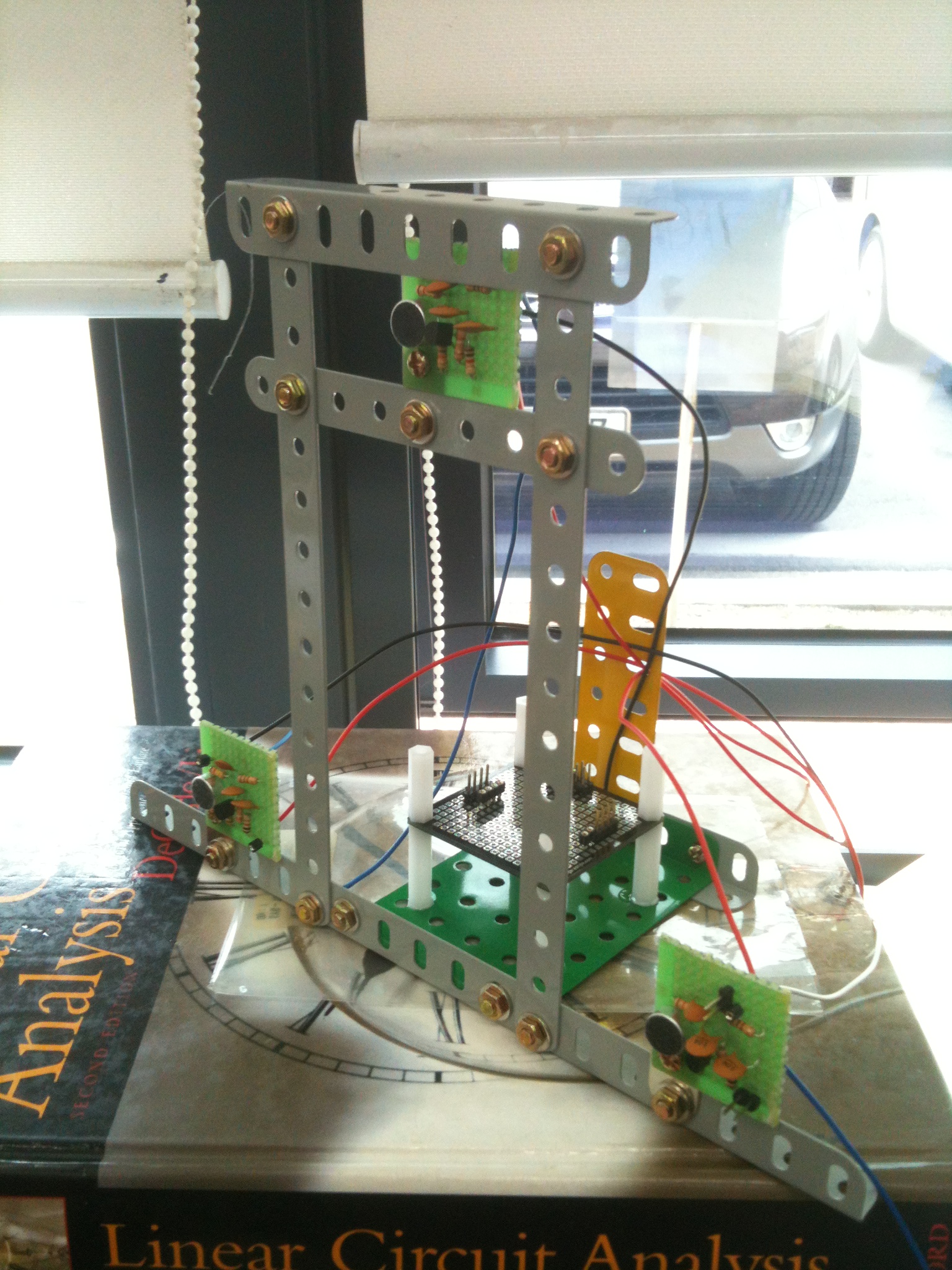

The picture below shows a test bed that I built to test the sound localization algorithm before making the working mock-up.